BGP on Minikube

In this tutorial, we’ll set up some BGP routers in Minikube, configure MetalLB to use them, and create some load-balanced services. We’ll be able to inspect the state of the BGP routers, and see that they reflect the intent that we expressed in Kubernetes.

Because this will be a simulated environment inside Minikube, this setup only lets you inspect the routers’ state and see what they would do in a real deployment. Once you’ve experimented in this setting and are ready to set up MetalLB on a real cluster, refer to the [installation guide]() for instructions.

Here is the outline of what we’re going to do:

- Set up a Minikube cluster,

- Set up test BGP routers that we can inspect in subsequent steps,

- Install MetalLB on the cluster,

- Configure MetalLB to peer with our test BGP routers, and give it some IP addresses to manage,

- Create a load-balanced service, and observe how MetalLB sets it up,

- Change MetalLB’s configuration, and fix a bad configuration,

- Tear down the playground.

This tutorial currently only works on amd64 (aka x86_64) systems, because the test BGP router container image doesn’t work on other platforms yet.

Set up a Minikube cluster

If you don’t already have a Minikube cluster set up, follow the instructions on kubernetes.io to install Minikube and get your playground cluster running. Once you’ve done so, you should be able to run minikube status and get output that looks something like this:

minikube: Running

cluster: Running

kubectl: Correctly Configured: pointing to minikube-vm at 192.168.99.100

You need to use Minikube >=0.24. Previous versions use a different IP range for cluster services, which is incompatible with the manifests used in this tutorial.
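If you would rather check the version requirement from a script, a small helper can compare dotted version strings. This is only a sketch: version_at_least is a hypothetical helper name, and the commented usage line assumes that minikube version prints the version as its third whitespace-separated field (for example "minikube version: v0.24.1"), which may not hold for every release.

```shell
# version_at_least HAVE WANT
# Succeeds if the dotted version HAVE is greater than or equal to WANT.
# A leading "v" is ignored; missing components count as 0.
version_at_least() {
  awk -v have="${1#v}" -v want="${2#v}" 'BEGIN {
    n = split(have, h, ".")
    m = split(want, w, ".")
    max = (n > m) ? n : m
    for (i = 1; i <= max; i++) {
      a = h[i] + 0
      b = w[i] + 0
      if (a > b) exit 0   # HAVE is newer
      if (a < b) exit 1   # HAVE is older
    }
    exit 0                # all components equal
  }'
}

# Possible usage (assumes the output format described above):
#   version_at_least "$(minikube version | awk '{print $3}')" 0.24 \
#     || echo "Minikube is too old for this tutorial"
```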

Set up BGP routers

MetalLB exposes load-balanced services using the BGP routing protocol, so we need a BGP router to talk to. In a production cluster, this would be set up as a dedicated hardware router (e.g. a Ubiquiti EdgeRouter), or a soft router using open-source software (e.g. a Linux machine running the BIRD routing suite).

For this tutorial, we’ll deploy a pod inside Minikube that runs the BIRD, Quagga and GoBGP software BGP routers. They will be configured to speak BGP, but won’t configure Linux to forward traffic based on the data they receive. Instead, we’ll just inspect that data to see what a real router would do.

BIRD is temporarily disabled due to a bug, so screenshots will look slightly different.

Deploy these test routers with kubectl:

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/test-bgp-router.yaml

This will create a deployment for our BGP routers, as well as four cluster-internal services. Wait for the router pod to start, by running kubectl get pods -n metallb-system until you see the test-bgp-router pod in the Running state:

NAME READY STATUS RESTARTS AGE

test-bgp-router-57fb7c798f-xccv8 1/1 Running 0 3m

(your pod will have a slightly different name, because the suffix is randomly generated by the deployment – this is fine.)
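Instead of re-running the command by hand, the wait can be scripted. The helper below is a minimal sketch, not part of MetalLB: pod_running is a hypothetical name, and it assumes the default kubectl get pods table layout shown above (pod name in the first column, status in the third).

```shell
# pod_running PREFIX
# Reads a `kubectl get pods` table on stdin and succeeds if at least one
# pod whose name starts with PREFIX is in the Running state.
pod_running() {
  awk -v prefix="$1" '
    NR > 1 && index($1, prefix) == 1 && $3 == "Running" { found = 1 }
    END { exit !found }
  '
}

# Possible usage: poll every two seconds until the router pod is up.
#   until kubectl get pods -n metallb-system | pod_running test-bgp-router; do
#     sleep 2
#   done
```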

In addition to the router pod, the test-bgp-router.yaml manifest created four cluster-internal services:

- The test-bgp-router-bird service exposes the BIRD BGP router at 10.96.0.100, so that we have a stable IP address for MetalLB to talk to.

- Similarly, the test-bgp-router-quagga service exposes the Quagga router at 10.96.0.101.

- The test-bgp-router-gobgp service exposes, you guessed it, the GoBGP router at 10.96.0.102.

- Finally, the test-bgp-router-ui service is a little UI that shows us what the routers are thinking.

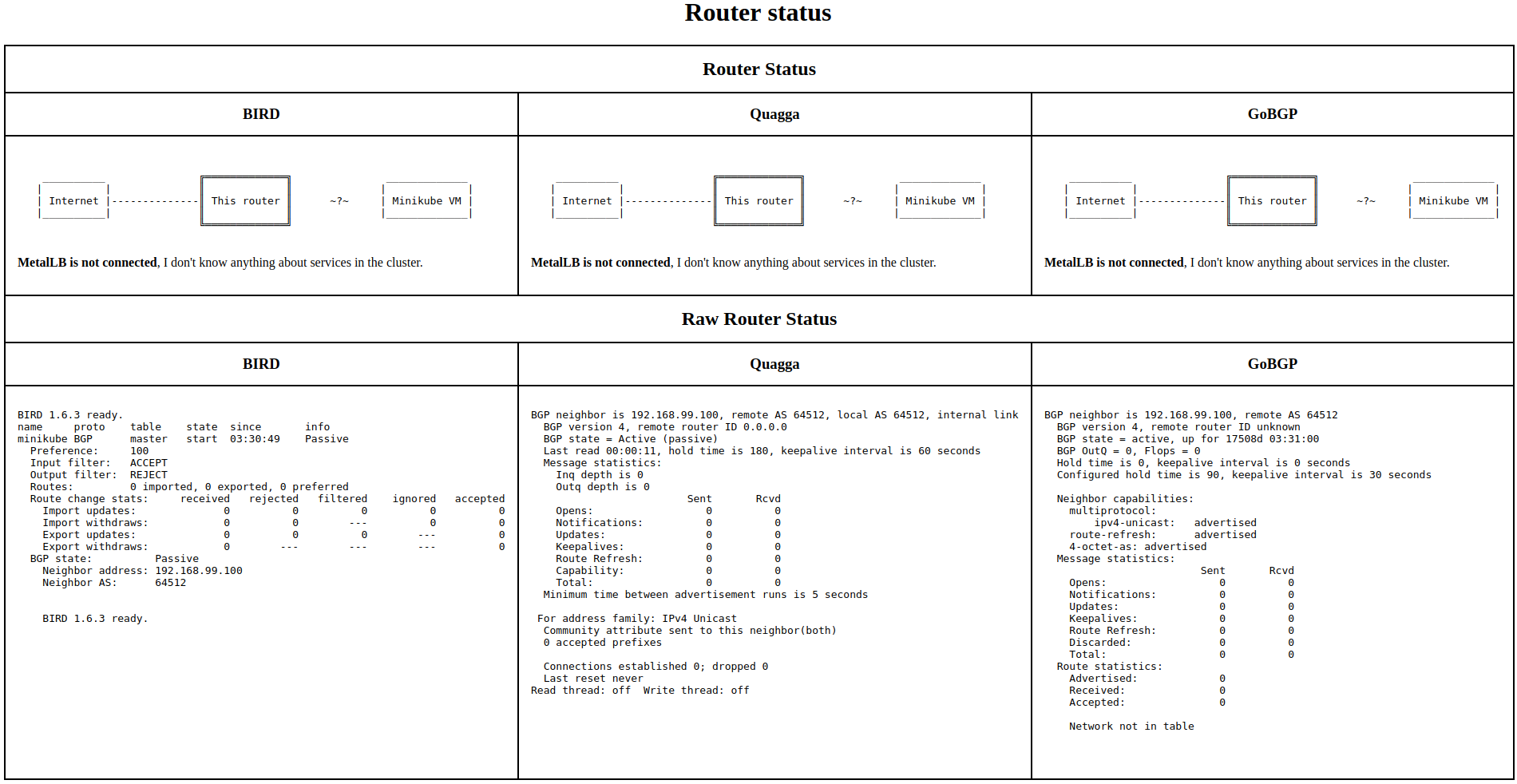

Let’s open that UI now. Run: minikube service -n metallb-system

test-bgp-router-ui. This will open a new browser tab that should look

something like this:

If you’re comfortable with BGP and networking, the raw router status may be interesting. If you’re not, don’t worry, the important part is above: our routers are running, but know nothing about our Kubernetes cluster, because MetalLB is not connected.

Of course MetalLB isn’t connected to our routers: it’s not installed yet! Let’s address that. Keep the test-bgp-router-ui tab open; we’ll come back to it shortly.

Install MetalLB

MetalLB runs in two parts: a cluster-wide controller, and a per-machine BGP speaker. Since Minikube is a Kubernetes cluster with a single VM, we’ll end up with the controller and one BGP speaker.

Install MetalLB by applying the manifest:

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/metallb.yaml

This manifest creates a bunch of resources. Most of them are related to access control, so that MetalLB can read and write the Kubernetes objects it needs to do its job.

Ignore those bits for now; the two pieces of interest are the “controller” deployment and the “speaker” daemonset. Wait for these to start by monitoring kubectl get pods -n metallb-system. Eventually, you should see two running pods, in addition to the BGP router from the previous step (again, the pod name suffixes will be different on your cluster).

NAME READY STATUS RESTARTS AGE

speaker-5x8rf 1/1 Running 0 2m

controller-65c85447-8tngv 1/1 Running 0 3m

test-bgp-router-57fb7c798f-xccv8 1/1 Running 0 3m

Refresh the test-bgp-router-ui tab from earlier, and… It’s the same! MetalLB is still not connected, and our routers still know nothing about cluster services.

That’s because the MetalLB installation manifest doesn’t come with a configuration, so both the controller and BGP speaker are sitting idle, waiting to be told what they should do. Let’s fix that!

Configure MetalLB

We have a sample MetalLB configuration in manifests/tutorial-1.yaml. Let’s take a look at it before applying it:

apiVersion: v1
kind: ConfigMap
metadata:
  namespace: metallb-system
  name: config
data:
  config: |
    peers:
    - my-asn: 64512
      peer-asn: 64512
      peer-address: 10.96.0.100
    - my-asn: 64512
      peer-asn: 64512
      peer-address: 10.96.0.101
    address-pools:
    - name: my-ip-space
      protocol: bgp
      cidr:
      - 198.51.100.0/24

MetalLB’s configuration is a standard Kubernetes ConfigMap, named config, in the metallb-system namespace. It contains two pieces of information: who MetalLB should talk to, and what IP addresses it’s allowed to hand out.

In this configuration, we’re setting up BGP peerings with 10.96.0.100, 10.96.0.101 and 10.96.0.102, which are the addresses of the test-bgp-router-bird, test-bgp-router-quagga and test-bgp-router-gobgp services respectively. We’re also giving MetalLB 256 IP addresses to use, from 198.51.100.0 to 198.51.100.255. The final section gives MetalLB some BGP attributes that it should use when announcing IP addresses to our routers.
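To see where the figure of 256 addresses comes from, the arithmetic behind a CIDR block can be worked out in the shell. The function below is an illustrative sketch only (cidr_bounds is a hypothetical name, and it handles plain IPv4 CIDR notation with no input validation); it is not how MetalLB itself parses pools.

```shell
# cidr_bounds CIDR
# Prints "FIRST LAST COUNT" for an IPv4 CIDR block such as 198.51.100.0/24.
# The body runs in a subshell so the IFS change does not leak to the caller.
cidr_bounds() (
  addr=${1%/*}      # address part, e.g. 198.51.100.0
  prefix=${1#*/}    # prefix length, e.g. 24
  IFS=.
  set -- $addr      # split the address into its four octets
  ip=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  size=$(( 1 << (32 - prefix) ))        # number of addresses in the block
  first=$(( ip & ~(size - 1) ))         # clear the host bits
  last=$(( first + size - 1 ))
  printf '%d.%d.%d.%d %d.%d.%d.%d %d\n' \
    $(( (first >> 24) & 255 )) $(( (first >> 16) & 255 )) \
    $(( (first >> 8) & 255 ))  $(( first & 255 )) \
    $(( (last >> 24) & 255 ))  $(( (last >> 16) & 255 )) \
    $(( (last >> 8) & 255 ))   $(( last & 255 )) \
    "$size"
)

# cidr_bounds 198.51.100.0/24
#   prints: 198.51.100.0 198.51.100.255 256
```

A /24 prefix leaves 32 - 24 = 8 host bits, hence 2^8 = 256 addresses.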

Apply this configuration now:

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/tutorial-1.yaml

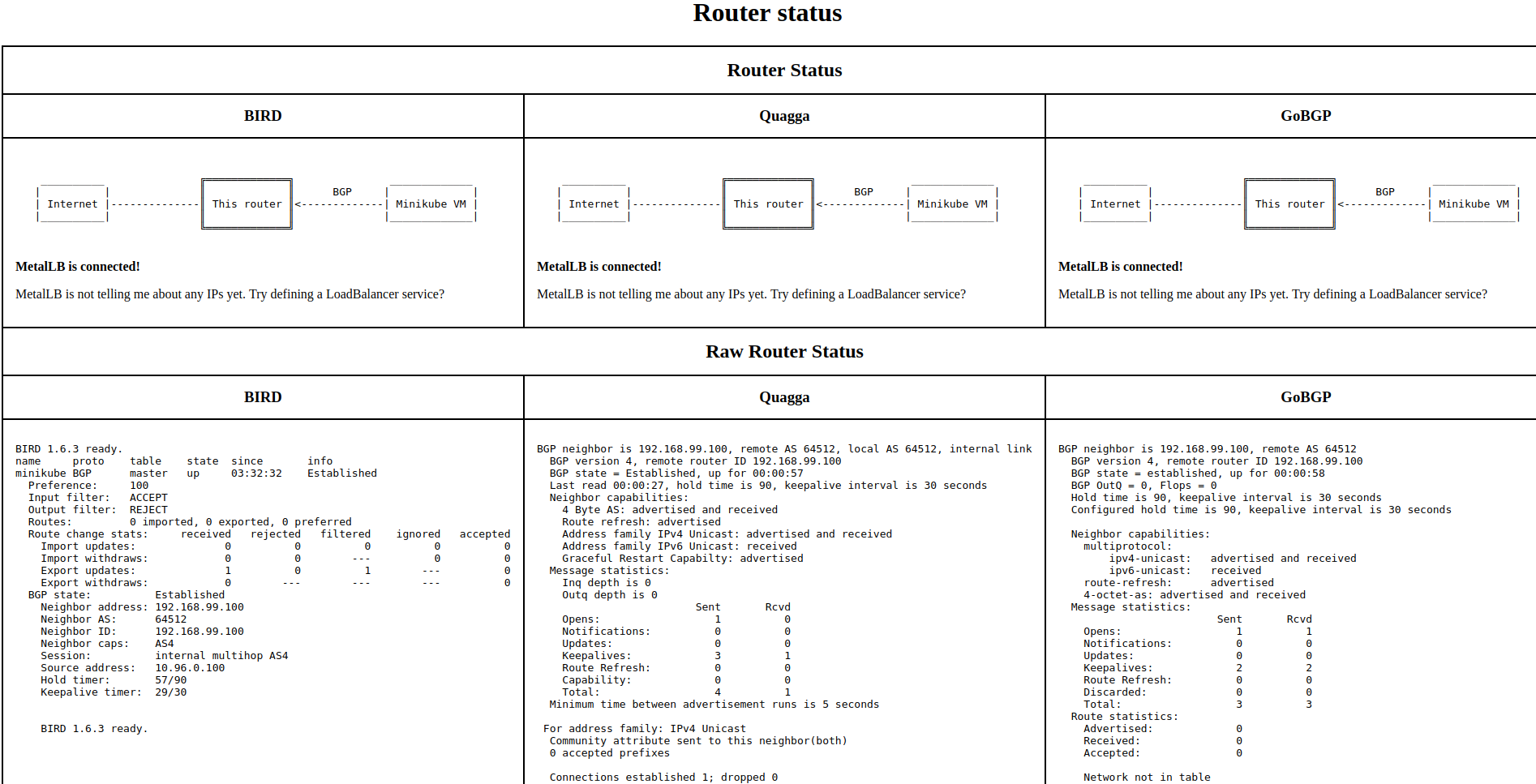

The configuration should take effect within a few seconds. Refresh the

test-bgp-router-ui browser page again (run minikube service -n

metallb-system test-bgp-router-ui if you closed it and need to get it

back). If all went well, you should see happier routers:

Success! The MetalLB BGP speaker connected to our routers. You can verify this by looking at the logs for the BGP speaker. Run kubectl logs -n metallb-system -l app=speaker, and among other log entries, you should find something like:

I1127 08:53:49.118588 1 main.go:203] Start config update

I1127 08:53:49.118705 1 main.go:255] Peer "10.96.0.100" configured, starting BGP session

I1127 08:53:49.118710 1 main.go:255] Peer "10.96.0.101" configured, starting BGP session

I1127 08:53:49.118715 1 main.go:255] Peer "10.96.0.102" configured, starting BGP session

I1127 08:53:49.118729 1 main.go:270] End config update

I1127 08:53:49.170535 1 bgp.go:55] BGP session to "10.96.0.100:179" established

I1127 08:53:49.170932 1 bgp.go:55] BGP session to "10.96.0.101:179" established

I1127 08:53:49.171023 1 bgp.go:55] BGP session to "10.96.0.102:179" established
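When the speaker logs are long, it can help to filter them down to the peers whose sessions came up. The following is a minimal sketch: established_peers is a hypothetical helper, and it assumes the log wording shown above (BGP session to "ADDRESS" established), which may differ in other MetalLB versions.

```shell
# established_peers
# Reads speaker log lines on stdin and prints the address of every peer
# for which an "established" message was logged, one per line.
established_peers() {
  sed -n 's/.*BGP session to "\([^"]*\)" established.*/\1/p'
}

# Possible usage:
#   kubectl logs -n metallb-system -l app=speaker | established_peers
```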

However, as the BGP routers pointed out, MetalLB is connected, but isn’t telling them about any services yet. That’s because all the services we’ve defined so far are internal to the cluster. Let’s change that!

Create a load-balanced service

manifests/tutorial-2.yaml contains a trivial service: an nginx pod, and a load-balancer service pointing at nginx. Deploy it to the cluster now:

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/tutorial-2.yaml

Again, wait for nginx to start by monitoring kubectl get pods, until you see a running nginx pod. It should look something like this:

NAME READY STATUS RESTARTS AGE

nginx-558d677d68-j9x9x 1/1 Running 0 47s

Once it’s running, take a look at the nginx service with kubectl get service nginx:

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx LoadBalancer 10.96.0.29 198.51.100.0 80:32732/TCP 1m

We have an external IP! Because the service is of type LoadBalancer, MetalLB took 198.51.100.0 from the address pool we configured, and assigned it to the nginx service. You can see this even more clearly by looking at the event history for the service, with kubectl describe service nginx:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal IPAllocated 24m metallb-controller Assigned IP "198.51.100.0"

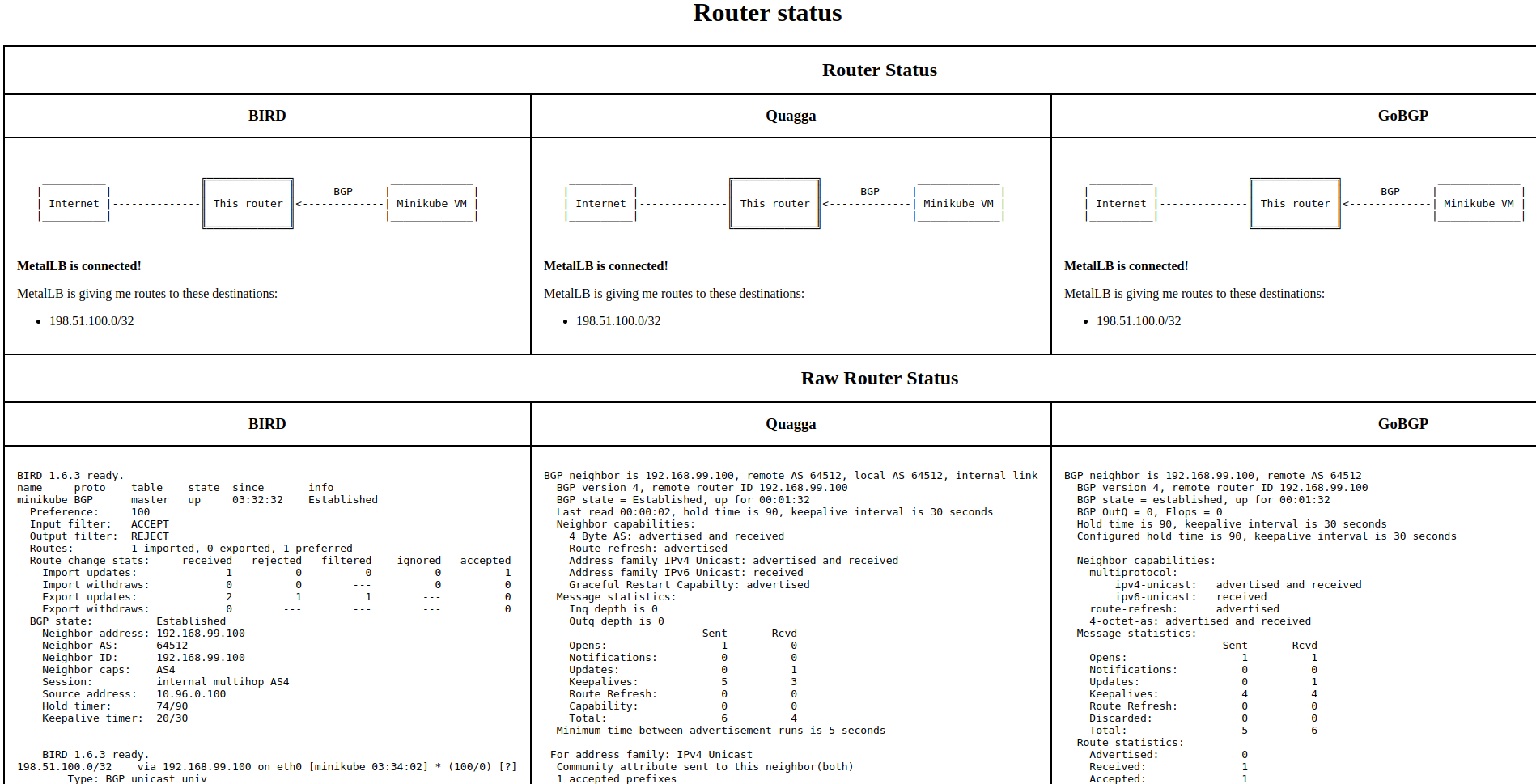

Refresh your test-bgp-router-ui page, and see what our routers thinks:

Success! MetalLB told our routers that 198.51.100.0 exists on our Minikube VM, and that the routers should forward any traffic for that IP to us.

Edit MetalLB’s configuration

In the previous step, MetalLB assigned the address 198.51.100.0, the first address in the pool we gave it. That IP address is perfectly valid, but some old and buggy wifi routers mistakenly think it isn’t, because it ends in .0.

As it turns out, one of our customers called and complained of this exact problem. Fortunately, MetalLB has a configuration option to address this. Take a look at the configuration in manifests/tutorial-3.yaml:

apiVersion: v1
kind: ConfigMap
metadata:
  namespace: metallb-system
  name: config
data:
  config: |
    peers:
    - my-asn: 64512
      peer-asn: 64512
      peer-address: 10.96.0.100
    - my-asn: 64512
      peer-asn: 64512
      peer-address: 10.96.0.101
    address-pools:
    - name: my-ip-space
      protocol: bgp
      avoid-buggy-ips: true
      cidr:
      - 198.51.100.0/24

There’s just one change compared to our previous configuration: in the address pool configuration, we added avoid-buggy-ips: true. This tells MetalLB that IP addresses ending in .0 or .255 should not be assigned.
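The rule is simple enough to express as a shell predicate. This sketch only mirrors the rule as described in this tutorial (addresses ending in .0 or .255 are skipped); is_buggy_ip is a hypothetical name and this is not MetalLB’s own implementation.

```shell
# is_buggy_ip ADDRESS
# Succeeds if the IPv4 address ends in .0 or .255, i.e. if it would be
# skipped by a pool with avoid-buggy-ips: true as described above.
is_buggy_ip() {
  case "$1" in
    *.0|*.255) return 0 ;;
    *)         return 1 ;;
  esac
}

# is_buggy_ip 198.51.100.0   # succeeds: the address nginx currently holds
# is_buggy_ip 198.51.100.1   # fails: this address remains assignable
```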

Sounds easy enough. Let’s apply that configuration:

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/tutorial-3.yaml

Refresh the test-bgp-router-ui page and… Hmm, strange, our routers are still being told to use 198.51.100.0, even though we just told MetalLB that this address should not be used. What happened?

To answer that, let’s inspect the running configuration in Kubernetes, by running kubectl describe configmap -n metallb-system config. At the bottom of the output, you should see an event log that looks like this:

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning InvalidConfig 29s metallb-controller configuration rejected: new config not compatible with assigned IPs: service "default/nginx" cannot own "198.51.100.0" under new config

Warning InvalidConfig 29s metallb-speaker configuration rejected: new config not compatible with assigned IPs: service "default/nginx" cannot own "198.51.100.0" under new config

Oops! Both the controller and the BGP speaker rejected our new configuration, because it would break an already existing service. This illustrates an important policy that MetalLB tries to follow: applying new configurations should not break existing services.
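Since the rejection only shows up in the event log, a script that applies configurations may want to look for it explicitly. The helper below is a rough sketch under the assumption that rejections appear as Warning events with the reason InvalidConfig, as in the output above; config_rejections is a hypothetical name.

```shell
# config_rejections
# Reads `kubectl describe configmap` output on stdin, prints every
# Warning/InvalidConfig event line, and succeeds only if one was found.
config_rejections() {
  awk '
    $1 == "Warning" && $2 == "InvalidConfig" { found = 1; print }
    END { exit !found }
  '
}

# Possible usage:
#   if kubectl describe configmap -n metallb-system config | config_rejections; then
#     echo "MetalLB rejected the configuration" >&2
#   fi
```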

(You might ask why we were able to apply an invalid configuration at all. Good question! This is a missing feature of MetalLB. In future, MetalLB will validate new configurations when they are submitted by kubectl, and make Kubernetes refuse unsafe configurations. But for now, it will merely complain after the fact, and ignore the new configuration.)

At this point, MetalLB is still running on the previous configuration, the one that allows nginx to use the IP it currently has. If this were a production cluster with Prometheus monitoring, we would be getting an alert now, warning us that the configmap written to the cluster is not compatible with the cluster’s running state.

Okay, so how do we fix this? We need to explicitly change the configuration of the nginx service to be compatible with the new configuration. To do this, run kubectl edit service nginx, and in the spec section add: loadBalancerIP: 198.51.100.1.

Save the change, and run kubectl describe service nginx again. You should see an IPAllocated event showing that MetalLB changed the service’s assigned address as instructed.
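If you prefer not to use an interactive editor, the same change can be made with kubectl patch. The sketch below only builds the patch document; lb_ip_patch is a hypothetical helper, and the commented command assumes the service is named nginx in the default namespace, as in this tutorial.

```shell
# lb_ip_patch ADDRESS
# Prints a JSON patch body that sets spec.loadBalancerIP to ADDRESS.
lb_ip_patch() {
  printf '{"spec":{"loadBalancerIP":"%s"}}\n' "$1"
}

# Possible usage, equivalent to the manual edit described above:
#   kubectl patch service nginx -p "$(lb_ip_patch 198.51.100.1)"
```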

Now, the new configuration that we tried to apply is valid, because nothing is using the .0 address any more. Let’s reapply it, so that MetalLB reloads again:

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/tutorial-4.yaml

You may have noticed that we applied tutorial-4.yaml, not the tutorial-3.yaml from before. This is another rough edge in the current version of MetalLB: when we submitted the configuration in tutorial-3.yaml, MetalLB looked at it and rejected it, but will not look at it again to see if it has become valid. To make MetalLB examine the configuration again, we need to make some cosmetic change to the config, so that Kubernetes notifies MetalLB that there is a new configuration to load. tutorial-4.yaml just adds a no-op comment to the configuration to make Kubernetes signal MetalLB.

This piece of clunkiness will also go away when MetalLB learns to validate new configurations before accepting the submission from kubectl.

This time, MetalLB accepts the new configuration, and everything is happy once again. And, refreshing test-bgp-router-ui, we see that the routers did indeed see the change from .0 to .1.

One final bit of clunkiness: right now, you need to inspect MetalLB’s logs to see that a new configuration was successfully loaded. Once MetalLB only allows valid configurations to be submitted, this clunkiness will also go away.

Teardown

If you’re not using the Minikube cluster for anything else, you can clean up simply by running minikube delete. If you want to do a more targeted cleanup, you can delete just the things we created in this tutorial with:

kubectl delete -f https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/tutorial-2.yaml,https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/test-bgp-router.yaml,https://raw.githubusercontent.com/google/metallb/v0.3.1/manifests/metallb.yaml

This will tear down all of MetalLB, as well as our test BGP routers and the nginx load-balanced service.